How memory works

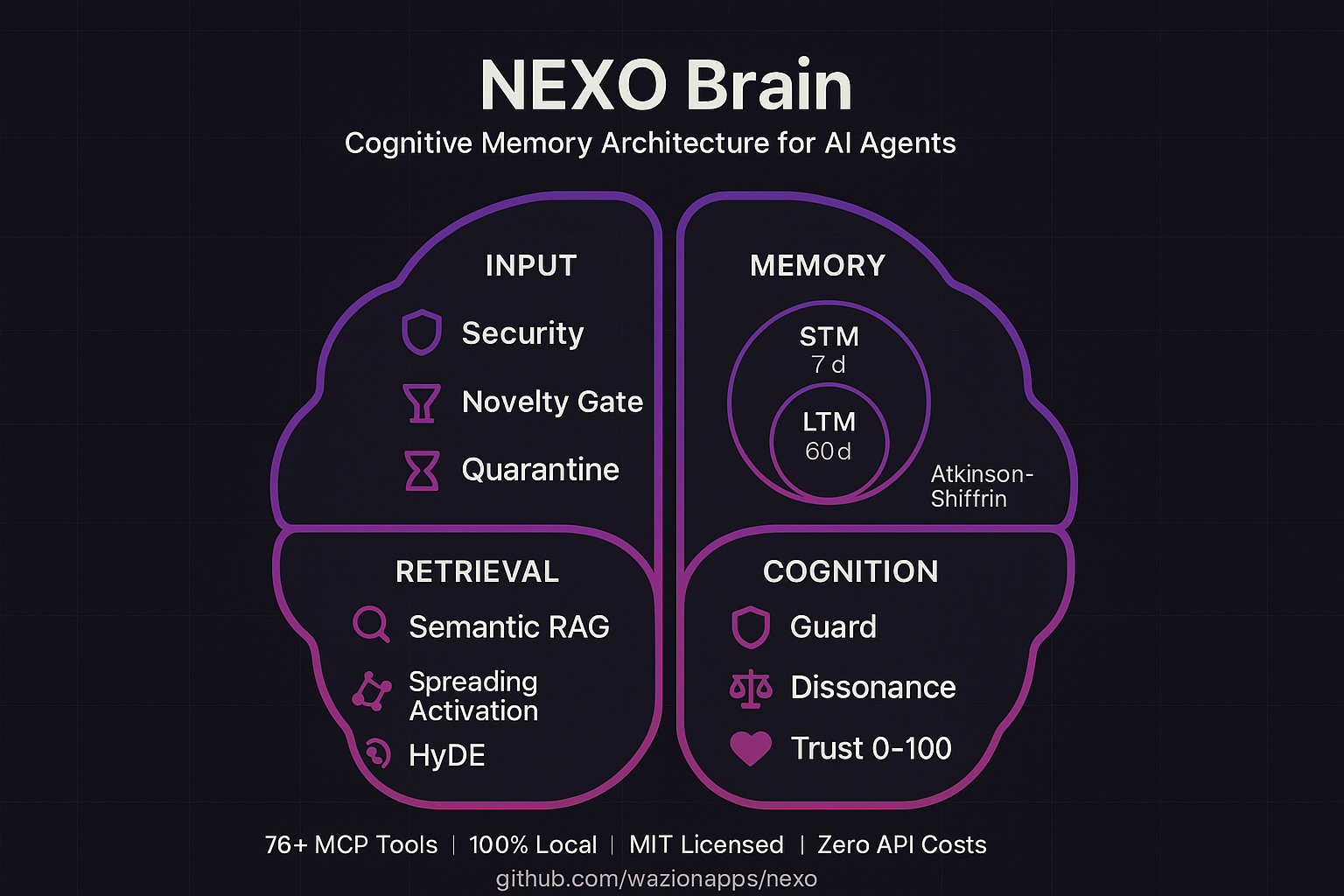

Based on the Atkinson-Shiffrin model from cognitive psychology, adapted for AI agents.

Biologically inspired, practically engineered

Every piece of information flows through three stores: the sensory register, short-term memory (STM), and long-term memory (LTM). Each store has its own retention rules and access patterns.
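To make the flow concrete, here is a minimal sketch of the three stores as plain Python containers. It assumes each item is a text string with a timestamp and uses the 48-hour and 7±2 figures from the list below. It also assumes the oldest STM item is displaced when capacity is reached, which is a simplification because the page does not give the actual eviction rule. The class and method names (`MemoryStores`, `perceive`, `attend`, `consolidate`, `expire_sensory`) are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Item:
    text: str
    created: float  # timestamp in seconds


@dataclass
class MemoryStores:
    # Hypothetical container; parameters taken from the figures quoted on this page.
    sensory_ttl: float = 48 * 3600  # unattended sensory input expires after 48 hours
    stm_capacity: int = 7           # midpoint of the 7±2 range
    sensory: list[Item] = field(default_factory=list)
    stm: deque[Item] = field(default_factory=deque)
    ltm: list[Item] = field(default_factory=list)

    def perceive(self, text: str, now: float) -> None:
        """All input enters the sensory register first."""
        self.sensory.append(Item(text, now))

    def attend(self, item: Item) -> None:
        """Move an item from the sensory register into STM.
        Assumption: when STM is full, the oldest item is displaced and lost."""
        self.sensory.remove(item)
        if len(self.stm) >= self.stm_capacity:
            self.stm.popleft()
        self.stm.append(item)

    def consolidate(self, item: Item) -> None:
        """Promote an STM item to LTM (the selection criterion is left out here)."""
        self.stm.remove(item)
        self.ltm.append(item)

    def expire_sensory(self, now: float) -> None:
        """Drop sensory items older than the TTL."""
        self.sensory = [i for i in self.sensory if now - i.created < self.sensory_ttl]
```

Attending to ten inputs in a row leaves only the most recent seven in STM, mirroring the limited-capacity buffer of the Atkinson-Shiffrin model.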

- The sensory register captures all input and discards noise after 48 hours

- STM holds 7±2 active items, each subject to exponential decay

- Rehearsal (retrieving or updating an item) resets its decay timer

- Consolidation promotes items from STM to LTM based on semantic similarity

- Sister memories fuse discriminatively: keep what differs, merge what overlaps

- The Ebbinghaus forgetting curve governs the natural pruning of memories

- Prediction-error gating rejects redundant input at write time
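The STM decay and rehearsal rules can be sketched as follows. This assumes activation decays as exp(-λ·t), where t is the time since the item was created or last rehearsed, with a hypothetical one-hour half-life. It also assumes that an over-capacity STM evicts its least active item. Neither the half-life nor the eviction rule comes from this page.

```python
import math
from dataclasses import dataclass
from typing import Optional

HALF_LIFE = 3600.0  # hypothetical: activation halves after one hour without rehearsal
DECAY_RATE = math.log(2) / HALF_LIFE  # λ in exp(-λ·t)


@dataclass
class STMItem:
    text: str
    last_rehearsed: float  # creation time or most recent retrieval/update, in seconds


def activation(item: STMItem, now: float) -> float:
    """Exponential decay: 1.0 right after rehearsal, approaching 0 over time."""
    return math.exp(-DECAY_RATE * (now - item.last_rehearsed))


def rehearse(item: STMItem, now: float) -> None:
    """Retrieving or updating an item resets its decay timer."""
    item.last_rehearsed = now


def admit(stm: list[STMItem], new: STMItem, now: float, capacity: int = 7) -> Optional[STMItem]:
    """Add an item; if STM exceeds capacity, evict and return the least active item.
    The eviction policy is an assumption, not taken from this page."""
    stm.append(new)
    if len(stm) > capacity:
        weakest = min(stm, key=lambda i: activation(i, now))
        stm.remove(weakest)
        return weakest
    return None
```

An item left untouched for one hour has an activation of about 0.5. Rehearsing it at that moment restores it to 1.0, so frequently used items stay at the top of STM.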
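Consolidation and sister-memory fusion might look like the sketch below. It is illustrative only. Memories are modeled as attribute-to-values maps, and Jaccard overlap of (attribute, value) pairs stands in for the embedding-based semantic similarity a real system would likely use. The 0.5 threshold is an arbitrary assumption. Fusion stores every value the two memories share once and keeps both versions where they disagree.

```python
# Hypothetical representation: attribute -> set of observed values.
Memory = dict[str, set[str]]


def pairs(m: Memory) -> set[tuple[str, str]]:
    return {(k, v) for k, vs in m.items() for v in vs}


def similarity(a: Memory, b: Memory) -> float:
    """Jaccard overlap of (attribute, value) pairs; a stand-in for semantic similarity."""
    pa, pb = pairs(a), pairs(b)
    union = pa | pb
    return len(pa & pb) / len(union) if union else 0.0


def fuse(a: Memory, b: Memory) -> Memory:
    """Discriminative fusion: overlapping values are merged into one copy,
    differing values are all kept."""
    return {key: a.get(key, set()) | b.get(key, set()) for key in a.keys() | b.keys()}


def consolidate(item: Memory, ltm: list[Memory], threshold: float = 0.5) -> None:
    """Fuse an STM item into its most similar LTM memory (its 'sister'),
    or store it as a new memory if nothing is similar enough.
    The threshold is a hypothetical value."""
    if ltm:
        best = max(range(len(ltm)), key=lambda i: similarity(item, ltm[i]))
        if similarity(item, ltm[best]) >= threshold:
            ltm[best] = fuse(item, ltm[best])
            return
    ltm.append(item)
```

For instance, fusing `{"os": {"linux"}}` with `{"os": {"macos"}}` yields `{"os": {"linux", "macos"}}`. The differing detail survives instead of being overwritten.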
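Forgetting-curve pruning can be approximated with the common exponential form of the Ebbinghaus curve, R = exp(-t/S), where t is the time since a memory was last accessed and S is its stability. The per-memory stability values and the 0.1 retention floor are assumptions for illustration, because this page does not specify how they are set.

```python
import math
from dataclasses import dataclass


@dataclass
class LTMMemory:
    text: str
    last_access: float  # seconds
    stability: float    # hypothetical strength S in seconds; larger means slower forgetting


def retention(m: LTMMemory, now: float) -> float:
    """Ebbinghaus forgetting curve R = exp(-t / S), with t = time since last access."""
    return math.exp(-(now - m.last_access) / m.stability)


def prune(memories: list[LTMMemory], now: float, floor: float = 0.1) -> list[LTMMemory]:
    """Keep only memories whose estimated retention is at or above the (hypothetical) floor."""
    return [m for m in memories if retention(m, now) >= floor]
```

With these numbers, a memory with one-day stability left untouched for three days falls to about 5% retention and is pruned. A memory with 30-day stability is still near 90% and is kept.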
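Prediction-error gating can be sketched by treating each stored memory as a prediction of future input and measuring how badly the best prediction misses. Here the error is approximated as 1 minus the highest word-level Jaccard overlap with any stored memory. Inputs with an error below a hypothetical 0.3 threshold are rejected as redundant. A real system might instead compare embeddings or model outputs.

```python
def tokens(text: str) -> set[str]:
    return set(text.lower().split())


def prediction_error(text: str, memories: list[str]) -> float:
    """Proxy for prediction error: 1 minus the best word overlap (Jaccard) with any memory.
    0.0 means memory fully 'predicted' the input; 1.0 means it is entirely novel."""
    new = tokens(text)
    best = 0.0
    for m in memories:
        old = tokens(m)
        union = new | old
        if union:
            best = max(best, len(new & old) / len(union))
    return 1.0 - best


def gated_write(text: str, memories: list[str], min_error: float = 0.3) -> bool:
    """Store the input only if it is surprising enough; return whether it was written.
    min_error is a hypothetical threshold."""
    if prediction_error(text, memories) < min_error:
        return False  # redundant: already predictable from memory
    memories.append(text)
    return True
```

Rejecting at write time keeps near-duplicates out of the stores entirely, so later stages such as consolidation and pruning have less to clean up.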

Full architecture: sensory register, STM, LTM, input pipeline, retrieval, and proactive systems